Prediction of Patient Deterioration

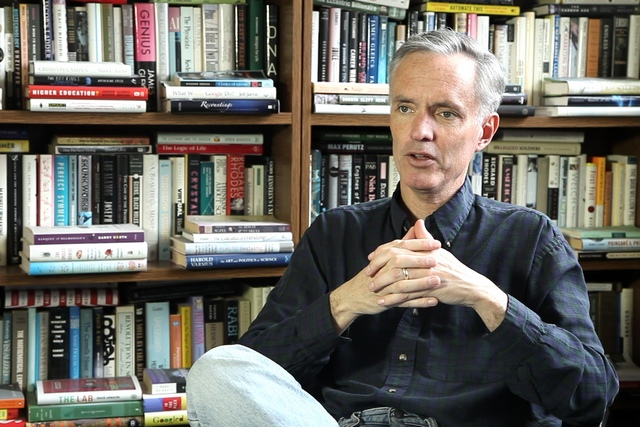

Biomedical Engineer Thomas Heldt on preventive patient care, patterns of disease progression, and quantifying an individual’s health

videos | September 18, 2015

What is the reactive patient care paradigm? How can algorithms that predict disease progression be created? What are the challenges in developing such algorithms? These and other questions are answered by Thomas Heldt, Assistant Professor of Electrical and Biomedical Engineering in the EECS Department at the Massachusetts Institute of Technology.

The physiological state of the patient might actually deteriorate suddenly. And that’s when clinicians need to take swift action to save the patient. This could be a sudden heart attack, it could be a problem breathing, it could simply be that the heart stopped beating. In all of these cases, the clinicians have to take swift action to save the patient. Some of these events occur suddenly, without much prior indication that the patient is actually doing worse. Sometimes you actually do have an indication from the measured signals that the patient is slowly deteriorating. But the general paradigm right now is that the crisis actually happens, and then the clinician responds. I mean, all the clinicians actually do their best to avert the crisis in the first place. Sometimes they can’t do so, the crisis happens, and the clinicians actually rush to the patient’s bedside and try their best to save the patient. This I would call a reactive patient care paradigm, as here you actually react to something going wrong with the patient. And you react very forcefully and very swiftly, and therefore you can actually save the patient.

We’re collecting a lot of data in critical care units, and the question that can naturally be asked is: with all of these data available, could we actually predict which patient might be in imminent danger of a deterioration of the physiological state, and which patient is actually doing well?

And that would actually allow clinicians to intervene much earlier, before the crisis actually happens. One of the central research questions in this field is: can we actually take all of these data that are being collected at the patient’s bedside in real time and process them to give the clinician an indication of what the state of the patient might be one hour, two hours, five hours or six hours out? So we could actually move from a patient care paradigm that is reactive, one that’s reacting to a crisis, to one that’s actually predictive, where with a reasonable degree of certainty we could actually tell the clinician which patient is in imminent danger of physiologic decompensation or deterioration and which patient might actually be stable and might remain so for the next 4, 6, 8 or 10 hours. That would allow the clinician to intervene earlier in those patients who are at a greater risk of deterioration, or simply to increase surveillance, to actually keep a closer watch on those patients who might be identified as being unstable or predicted to deteriorate, versus those who are actually quite stable and might not need further surveillance beyond what is already being provided.

So the challenges here are to collect a large amount of data and to learn from these data: to have a large database that has a sufficient number of deterioration events, whatever this event might be, and then to actually look backwards from these deterioration events and understand whether there are signatures in the multi-parameter data streams that we collect at the bedside of the patient. And to see whether we can actually develop algorithms that identify these patterns, and then deploy these algorithms at the bedside to see whether we can correctly identify, ahead of time, patients that might be at risk of decompensation or deterioration. Obviously, we have to make sure that the algorithms and the alarms that we issue are very specific, meaning that we don’t unnecessarily flag patients who are not at risk of deterioration. But that can actually be determined through an adequate design of the experiments that one would have to conduct in order to actually deploy these algorithms.
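The idea of looking backwards from deterioration events can be illustrated with a small labeling step. This is only a sketch under stated assumptions, not the method described in the talk: the function name `label_windows`, the hourly sample times, the single event at 6.5 hours and the two-hour horizon are all hypothetical. Each bedside sample is marked as positive if a recorded deterioration event follows it within the chosen horizon, which is one common way to turn event timestamps into training targets.

```python
from bisect import bisect_right


def label_windows(sample_times, event_times, horizon_hours):
    """Label each sample 1 if an event follows within horizon_hours, else 0.

    All times are in hours since admission (an assumption of this sketch).
    """
    events = sorted(event_times)
    labels = []
    for t in sample_times:
        # Index of the first event strictly after the sample time t.
        i = bisect_right(events, t)
        # Positive only if such an event exists and is close enough.
        upcoming = i < len(events) and events[i] - t <= horizon_hours
        labels.append(1 if upcoming else 0)
    return labels


# Hypothetical patient: hourly samples, one deterioration event at 6.5 h.
sample_times = [0, 1, 2, 3, 4, 5, 6, 7, 8]
event_times = [6.5]
labels = label_windows(sample_times, event_times, horizon_hours=2)
print(labels)
```

With these made-up inputs, only the samples at hours 5 and 6 are labeled positive, because they are the only ones followed by the event within two hours. A real pipeline would also have to decide how to treat samples taken after an event and how to handle patients with several events.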

The problem in this research area right now is really building large enough and rich enough databases of patients who experience events in order to apply these kinds of machine learning algorithms. We are involved here at MIT with developing a large database of patients in critical care; it’s called the MIMIC-II database. And the database, I think, now has about 50,000 patients, about 5 to 10 thousand of which have high-resolution bedside monitoring data available. So one central challenge is to build a database on which you can actually train algorithms and on which you can actually test algorithms before you deploy these algorithms in a clinical environment.

Another challenge is actually to identify patterns, patterns of disease progression or patterns that might precede a clinical deterioration, and to see whether these patterns are actually consistent across patients.

If each patient has his or her own pattern, then it’s very difficult for an algorithm to actually identify these patterns across a large enough patient population for these algorithms to actually make a difference.

So pattern identification is a big challenge: to see whether there are actually consistent patterns across patients and to identify these patterns. Once we have these patterns identified, we can actually program computers to recognize them, and then these computers can actually be deployed in clinical settings, and we can actually evaluate their performance. But it’s not a priori clear what these patterns might be. So there is a big step that actually has to do with identifying patterns of patient deterioration.

And then we have to have access to a clinical environment where we can actually test the algorithms, and most often this is done at collaborating sites that are willing to actually put these algorithms at the patient’s bedside. And first it’s done in a silent mode, which means that the alarm that’s being issued is not shown to the clinicians. It’s just to evaluate the algorithm and to see whether the algorithm actually identifies the correct patients and does not identify those patients who might not have the disease or who might not be at risk of physiological decompensation. Obviously, there will always be some false positives and there will always be some false negatives. That means there will always be some patients that you incorrectly flag as being at risk of deterioration, and then there are some patients that might deteriorate who you didn’t flag. And it’s exactly these kinds of performance metrics that need to be identified, specified and evaluated in order to figure out whether a particular algorithm is a valid clinical tool that should be deployed in a clinical setting.
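To make the false positive and false negative counts concrete, here is a minimal sketch of how a silent-mode evaluation might be summarized. It is an illustration only: the function name `evaluate_alarms` and the eight-patient example are hypothetical, and the talk does not specify which metrics or thresholds would be used. Sensitivity is the fraction of deteriorating patients who were flagged, specificity is the fraction of stable patients who were not flagged, and the positive predictive value is the fraction of alarms that were correct.

```python
def evaluate_alarms(flagged, deteriorated):
    """Summarize silent-mode alarms against observed outcomes.

    flagged[i] is True if the algorithm raised an alarm for patient i;
    deteriorated[i] is True if that patient actually deteriorated.
    """
    tp = fp = tn = fn = 0
    for was_flagged, did_deteriorate in zip(flagged, deteriorated):
        if was_flagged and did_deteriorate:
            tp += 1  # correctly flagged
        elif was_flagged:
            fp += 1  # false positive: flagged but stayed stable
        elif did_deteriorate:
            fn += 1  # false negative: deteriorated without a flag
        else:
            tn += 1  # correctly left unflagged
    return {
        "tp": tp, "fp": fp, "tn": tn, "fn": fn,
        # Each ratio is None when its denominator is zero.
        "sensitivity": tp / (tp + fn) if (tp + fn) else None,
        "specificity": tn / (tn + fp) if (tn + fp) else None,
        "ppv": tp / (tp + fp) if (tp + fp) else None,
    }


# Hypothetical silent-mode results for eight patients.
flagged = [True, True, False, False, False, True, False, False]
deteriorated = [True, False, False, True, False, True, False, False]
result = evaluate_alarms(flagged, deteriorated)
print(result)
```

In this made-up example there are two true positives, one false positive, one false negative and four true negatives, giving a specificity of 0.8 and a sensitivity of two thirds. Which trade-off between the two is acceptable is a clinical decision that the sketch does not address.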

So looking forward over the next 10 or 20 years, the trend is toward more and more measurements, more and more signals that can be obtained less and less invasively, not only from patients who are in specialized environments like the critical care unit or the operating room; there is also a big area of wearable monitors, wearable devices that can monitor your physiological state. So the big prospect over the next 10 or 20 years is to actually take all of these measurements and to develop algorithms that allow us to quantify the state of your health. And not only to quantify the state of your health, but also to predict what might actually be happening to your physiological systems ahead of time, so that we can intervene, or the clinicians can intervene, before a major health crisis actually takes place. And that is really, I think, one of the big opportunities of all of the data that are currently being collected in hospital environments and that we are starting to collect from patients or even healthy people through wearable monitoring technology: to use all of these data to actually define the health of the patient and to predict what might actually be happening to the patient over the next few hours.