Newborn Face Perception Simulated

Parents can finally find out how their newborn children see them

news | July 7, 2015

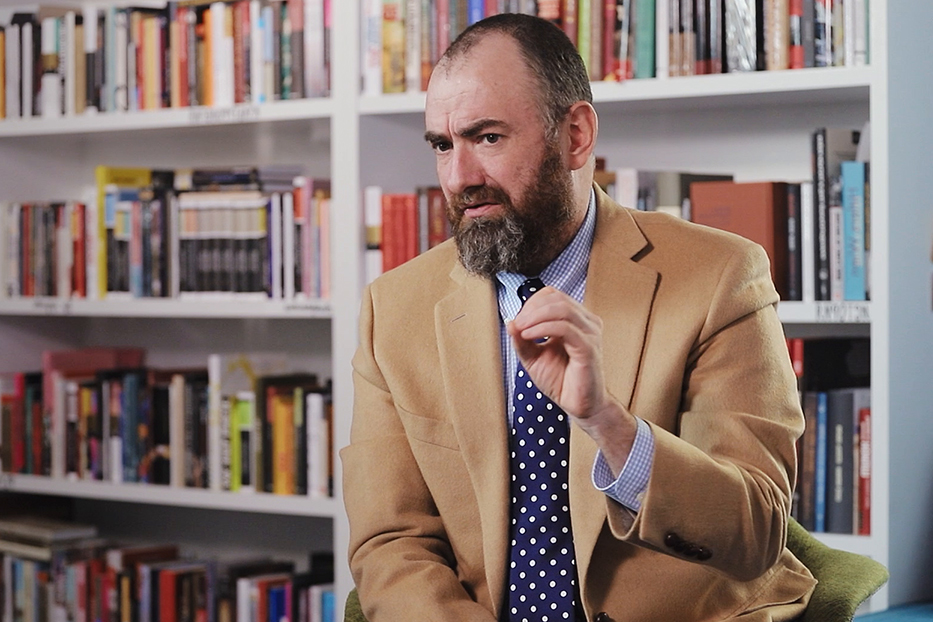

In November 2014, the Journal of Vision published a paper titled “Simulating newborn face perception”, describing an experiment on facial expression recognition in newborns. We asked one of the authors of this research, Dr. Olov von Hofsten, to comment on the work.

The Study

The goal of this research was to test a hypothesis about newborn perception of facial expressions: that even when visual information is limited, emotional expressions are easier to read when they are expressed dynamically. To test this, however, a method of simulating newborn vision was needed. What a newborn can see is a question most parents ask themselves, and although we know a lot about newborn vision, no serious attempt had previously been made to simulate images corresponding to it.

To simulate images with the correct reduction in contrast and resolution, some measure of newborn visual perception was necessary. One property of newborn vision that can be measured, and has been measured extensively, is contrast sensitivity: the contrast needed to distinguish a pattern of evenly spaced lines of a given width from a plain grey field. We developed a mathematical model that combines these data into a transfer function, which turns a real image into the one perceived by a newborn. One assumption was made: at a given spatial frequency, the reduction in contrast is the same for all contrast levels, high and low.
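As a rough illustration of the idea, and not the model from the paper, the sketch below filters an image in the Fourier domain by the ratio of a newborn to an adult contrast sensitivity curve. Everything named here is an assumption made for the example: the functions `toy_csf`, `pixels_per_degree` and `simulate_view`, the exponential shape of the curves, and all numeric values, which are placeholders rather than measured data. Multiplying each spatial frequency by one fixed factor is what the assumption above amounts to, since the attenuation then does not depend on the contrast level.

```python
import numpy as np

def toy_csf(freq_cpd, peak_sensitivity, cutoff_cpd):
    """Hypothetical contrast sensitivity curve; placeholder shape, not measured data."""
    return peak_sensitivity * np.exp(-freq_cpd / cutoff_cpd)

def pixels_per_degree(width_px, width_cm, distance_cm):
    """Image pixels per degree of visual angle for an object seen at a given distance."""
    angle_deg = np.degrees(2.0 * np.arctan(width_cm / (2.0 * distance_cm)))
    return width_px / angle_deg

def simulate_view(image, ppd, newborn_csf, adult_csf):
    """Scale each spatial frequency by the newborn/adult sensitivity ratio."""
    rows, cols = image.shape
    # fftfreq returns cycles per pixel; multiplying by ppd gives cycles per degree.
    fy = np.fft.fftfreq(rows) * ppd
    fx = np.fft.fftfreq(cols) * ppd
    radial = np.hypot(fy[:, None], fx[None, :])
    transfer = np.clip(newborn_csf(radial) / adult_csf(radial), 0.0, 1.0)
    transfer[0, 0] = 1.0  # leave the mean luminance unchanged
    return np.real(np.fft.ifft2(np.fft.fft2(image) * transfer))

# Placeholder parameters, chosen only to make the example run.
newborn = lambda f: toy_csf(f, peak_sensitivity=10.0, cutoff_cpd=0.5)
adult = lambda f: toy_csf(f, peak_sensitivity=200.0, cutoff_cpd=8.0)

# A 64 x 64 vertical grating standing in for a 15 cm wide face image.
x = np.arange(64)
grating = 0.5 + 0.4 * np.sin(2 * np.pi * 8 * x / 64)[None, :] * np.ones((64, 1))

near = simulate_view(grating, pixels_per_degree(64, 15.0, 30.0), newborn, adult)
far = simulate_view(grating, pixels_per_degree(64, 15.0, 120.0), newborn, adult)
print("contrast (std) original, 30 cm, 120 cm:", grating.std(), near.std(), far.std())
```

Viewing distance enters only through the angular size of the image: at a greater distance the same face covers fewer degrees, so its details move to higher spatial frequencies, where the transfer function in this sketch removes more contrast.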

The results showed that a happy expression is easier to recognize than other expressions, and that at 30 cm most expressions can be identified. At 60 cm, however, the task is much more difficult, and at 120 cm the subjects were close to guessing at random. An exception was the angry expression, which was more easily identified at 120 cm than at 60 cm. No explanation for this outlier was found, but it could depend on which areas of the face change in this specific expression. It should be noted that the study relies on adult subjects to interpret the images, an ability that is much more developed in adults than in newborns. Also, the simulations do not take into account the accommodation of the eye (its focusing), which is fixed in newborns and will therefore degrade images at 60 and 120 cm further, since the data is based on a distance of 40 cm. The idea was that if an adult subject could make out what was presented, a newborn child could, in principle, do so too. Conversely, if an adult could not make out what was shown, a newborn surely could not do so either.

Background

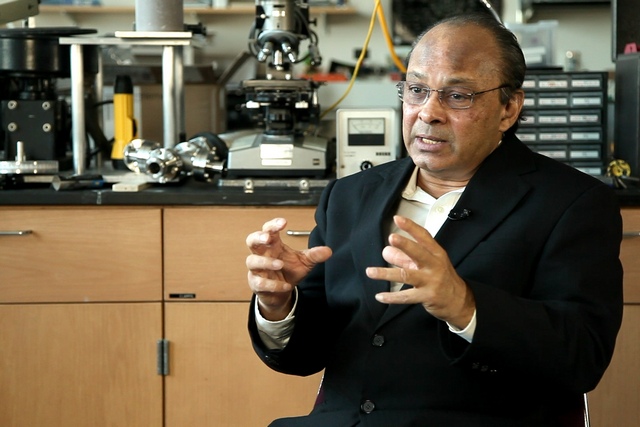

The study brought together several areas of expertise. The research group in Oslo, headed by Prof. Svein Magnussen, specializes in visual information processing in cognitive neuroscience and performed the experiments with the adult subjects. Prof. Claes von Hofsten has a profound knowledge of infant vision and development, acquired over a lifetime, and I did my PhD studies on simulating images for microscopy. Prof. von Hofsten and Prof. Magnussen had long discussed the possibility of simulating images with newborn visual information, but it was not until I could contribute my expertise in optics that the idea became reality.

Future Direction

Since the method of calculating the transfer function is new, it needs to be studied further and verified by experiments. A verification could easily be done by introducing a controlled aberration and comparing the results with simulations. If the method is verified and developed further, e.g. by taking into account the accommodation of the eye, it opens up many possibilities. A transfer function of the eye and brain is very difficult to measure, but the contrast sensitivity function is fairly straightforward to record. This could give insights into the vision not only of newborns, but perhaps also of animals and people with visual disabilities. That, in turn, would help in the development of visual aids and cues, as well as improve our understanding of vision.
