Plasticity in Speech Perception

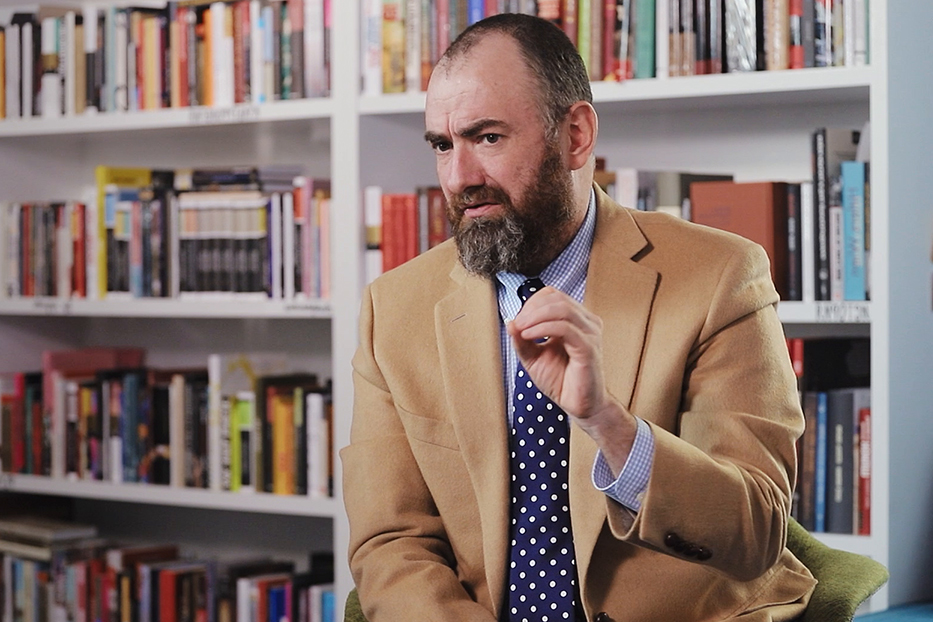

Neuroscientist Sophie Scott on how people speak the same language differently, adaptation to new sounds, and the impact of social factors on sound processing

videos | July 3, 2018

There are some really interesting differences in how the brain processes visual information and auditory information. Auditory information doesn't hang around: a sound happens and then it's gone, whereas a lot of the visual information in your world actually stays there. It might move about a bit, but it doesn't go anywhere. You only hear a sound because something happened: sounds come from events, from actions involving objects, and then they're gone again. If something is visually present in my world, then as long as there's light, I'll know it's there. So you've got these differences.

There's another really interesting difference, which links into the plasticity of how the brain deals with this information. In the visual system of lots of mammals, you find single cells that care about, for example, lines of particular orientations: a cell responds to a line at this orientation, and not at that orientation. In visual processing you can see features being built up, lines and shapes that can reconstruct your visual world from these basic features, like a palette of things to look for. What you don't find in the auditory system is anything that fixed.

That might be because sounds are transient: you have to process them quickly, and they can be strongly affected by the environment you're in. My voice sounds different in this room than it would if I were standing outside in noisy traffic. It's also the case that, if we look at some communication sounds, the kind of plasticity you get from this very heterogeneous response in the brain actually helps you deal with a signal that can be highly variable. That matters in the human brain in several different ways.

The first, and the most obvious, is that we all talk differently. You go out into the world and you might meet lots and lots of people who speak the same language as you, but it is incredibly unlikely that they actually speak it in the same way. People speak differently because of their regional accent, because of their mannerisms, because of idiosyncrasies in their particular production.

For example, my son goes to school in central London, near UCL, where I'm based. There's a speech sound in English, the vowel in words like [like] or [bike], and it's just disappearing from London accents of English. Children say [lack], [back], [rad]. When you first hear it you go "What?", and the next thing you know you are just processing it; you just deal with it. It happens all the time. You need to be very plastic in your response to speech, because you've got to be able to deal with somebody saying the word [like] as [lack] or as [laick], all the different ways that people who speak the same language as you might actually produce it. You've got this speaker-to-speaker variation, and plasticity helps you deal with it: it helps you cope with the fact that there is huge variation out there in how people actually produce the same language. Technically, the same words can be said quite differently.

The other situation where you find this is if you have problems with your hearing. If you lose your hearing and get a hearing aid, or get fitted with a cochlear implant, you may have to start understanding the language you normally speak all over again, because it now sounds completely different. With a cochlear implant, instead of the voice you're hearing now, you hear something that sounds a lot more like a whisper. People adapt to that incredibly quickly, and indeed, if they learn to use an implant as children, they can do amazing things with it. They can hear music, they can hear all sorts of things that technically they probably shouldn't be able to. So the plasticity built into the system helps us deal with the day-to-day variation that is always there, such as encountering completely novel ways of speaking a language you're familiar with, and it can also help you if you have to deal with change, with a completely altered-sounding input.

It's also the case that we just don't know what this does to your brain; we just don't know how it is actually implemented. I wouldn't be surprised if coping with novel-sounding people works the same way: even where there aren't accent differences, there will be individual differences, an idiolect, that are idiosyncratic and specific to one person, and if they're speaking the same language as you, you will tune into that. I think it would be very interesting to know more about how this works across different languages. Historically, what has tended to happen is that this gets studied largely in English, or if not in English, in Germanic languages. There's not even much work in French on the brain systems involved in decoding and coping with different voices. I think this is one of the areas where we really need to take a step back and start doing more cross-cultural work at the brain level, to get a sense of how different languages might be affected by this, and by the social, economic, and geographical factors that feed into accent differences, or the lack of them.

When you think about people talking, we can study it by understanding and thinking about brains, but we can never forget about the social dimension, because it's always relevant, it's always important, and it is going to influence how the brain processes that signal. For example, there was some very nice work from Holland. They just played people stories. The stories had been manipulated so that the sound [sh] and the sound [s] were mixed together into an ambiguous sound. They didn't tell people, "Work out what's going on here." But people immediately adapted: just by listening, they adjusted their perception of the difference between [sh] and [s]. If you then tested them on telling those sounds apart, their perception had actually shifted, but only for that talker. So there's a human being behind the changes their brain has made. They haven't remapped the whole phonetic system; they've done it just for that one person. So you can't really separate the social aspect from those nitty-gritty, cognitive, brain-level changes.

I suspect that, at its heart, the plasticity we see in the auditory system relates to the requirements of dealing with sound. Sound is strange. It can vary a lot. If you're in a very noisy room trying to make out what your friend is saying, you've got a very different acoustic task than if you are talking to that same friend on the phone or in a quiet room. So the acoustic environment you're in can make the same task completely different. And because sounds go, you've got to deal with them very quickly: they don't hang around for you to inspect and pay more attention to; they've already gone.

In the field, one of the things we are interested in is how we can better understand this plasticity. One of the things we found is that the differences between people who adapt very well to very different-sounding speech and people who adapt a lot less weren't associated with differences in the auditory parts of the brain. They had to do with higher-order parts of the brain, which seemed to exert an influence backwards, almost, onto how the auditory system was engaging. That was very interesting, but it does speak to a sort of tension: are we looking at perceptual systems and their adaptation, which might vary across people, or are we looking at higher-order systems, perhaps linguistic ones, guiding that? There's a real tension there, because some people feel very strongly that it's all got to be driven by a perceptual, inward-bound process.

For those people, there's no scope for top-down influences. But we also know, for example, that there are continuous loops of processing between the cortex and subcortical structures that are really important in auditory processing. Maybe it's not really meaningful to talk about bottom-up and top-down at all; maybe everything we're seeing, even the bottom-up stuff, actually reflects this continuous looping of processing of the auditory signal between the cortex and the ascending auditory pathway, which does a huge amount of complex processing on sound. I think one of the things we're going to have to tackle is the inherent complexity of that, and actually understand some of these cortical loops. It means we can't just draw an arrow going up from the ear to the brain. You're seeing this continuous looping even before you start to engage other brain areas that influence it. We have to engage properly with the complexity. I think that's going to start telling us more about how this plasticity works and how, for example, it might vary across people.